The Ramblr Data Engine, which combines low-level annotations with natural language instructions, generates training data tailored for domain-specific tasks, and minimizes the need for prompt engineering or manual data creation.

Abstract

Instruction tuning has advanced the capabilities of vision–language models (VLMs), yet current progress is largely measured on broad, general-purpose benchmarks. In practice, these models often struggle on domain-specific tasks that require specific and consistent expert knowledge. A key barrier is the lack of instruction dataset generation methods that is both scalable and produces high-quality visually grounded data. The Ramblr Data Engine solves these problems by combining low-level annotations with natural language instructions. It generates training data tailored for domain-specific tasks, minimizing the need for prompt engineering or manual data creation. By decoupling video annotations and text labels, the system can automatically generate captions, question-answer pairs and object-referring instructions from the same video labels, allowing rapid iteration and reuse of the data for different generation tasks.

Introduction

The ability of multimodal systems to generalize across domains depends not only on scale but also on the quality of supervision. Current vision–language models (VLMs) are typically trained on web-scale text–image pairs or handcrafted prompts where the former lacks quality and the latter lacks scalability [1]. This leads to limited grounding for fine-grained understanding of the visual context. Additionally, while VLMs perform well on general-purpose evaluations, they underperform on domain-specific tasks, where success requires fine-grained understanding of object attributes, spatial configuration, activity structure and process understanding [2, 3, 4].

Recent work has attempted to address these challenges by converting structured annotations into instruction-style datasets. For example, GeoChat [4] leverages attributes and relationships in remote sensing imagery to generate instruction dataset for region captioning, visual question answering, scene classification and object referring; SVIT [5] repurposes Visual Genome [6] and COCO annotations into GPT-4–driven instruction–response pairs; Osprey [7] curates large-scale “mask–text” datasets from segmentation benchmarks. While impactful, these pipelines are either research prototypes, restricted to specific domains, or require significant manual curation. Moreover, they rely on existing annotated datasets rather than supporting the annotation process itself, limiting their applicability to new domains where curated datasets do not yet exist.

To address these limitations, we propose Instruction Generation using the Ramblr Data Engine, which transforms standard visual annotations into natural language instructions. By leveraging masks, objects, attributes, relations and activities, the framework systematically generates instruction–response pairs aligned with the tasks that a given VLM is expected to solve. Unlike prior pipelines, which typically rely on pre-existing annotated datasets and treat labels as an endpoint, our method provides an end-to-end approach: annotations are created within the platform and directly repurposed for instruction synthesis, seamlessly linking annotation with downstream fine-tuning. Experiments show that Ramblr-generated instruction datasets consistently improve VLM performance not only on general benchmarks, but also on domain-specific evaluations in visual question answering, multiple-choice reasoning, captioning and specialized visual understanding tasks.

Methods

The instruction set generation (ISG) framework is implemented as part of the Ramblr Data Engine and makes use of the set of video annotations resulting from semi-automated, or fully automated annotation pipelines. This tutorial demonstrates how one can annotate videos using the Ramblr Data Engine. Based on those labels, a configurable set of visual-question answering instructions and conversations can be synthesized (Captioning, Multiple Choice, Free Form, Process Understanding, Spatial Awareness and Temporal Awareness). Each instruction type was defined through a task-specific template and generation rules, such as the available annotation context for the synthesis, allowing a configurable difficulty level and adaptation to different dataset structures or supervision schemes.

The LLM-based instruction sets and conversations were generated using a well-calibrated and robust agentic framework. The generation process is as follows:

- Annotation extraction – Annotations are pulled from the selected videos and the defined annotation-context, and converted into a structured text format. This format encodes detailed information about objects, their spatial relations, activities, locations (bounding boxes), and other relevant attributes.

- Context preparation – The text representation is passed to the LLM agent along with a user-provided prompt. The agent is internally configured to align with the prompt while producing diverse and semantically rich instructions of different types.

- Instruction generation – For each frame or clip, the LLM can generate multiple instructions. It introduces augmentations and variations while operating within strict guardrails to minimize hallucinations.

- Final output – The resulting instructions are converted into the required VLM-ready format, ensuring compatibility for instruction tuning.

Results

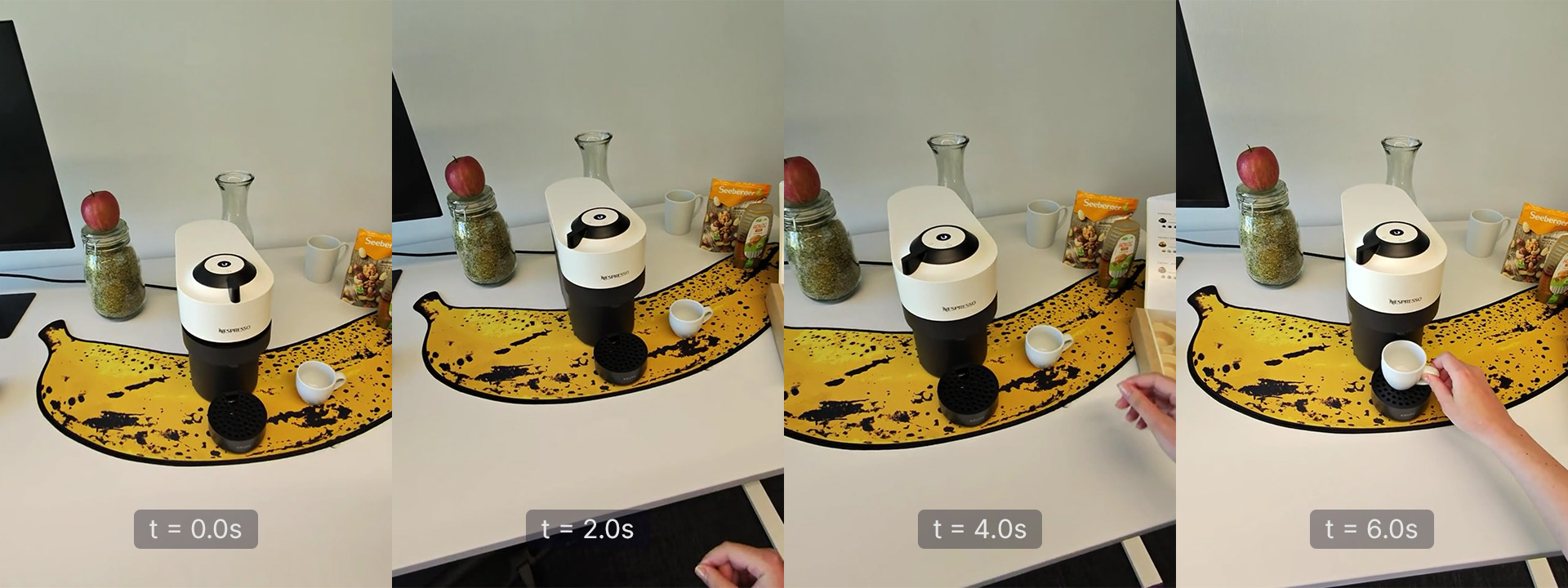

The ISG framework was tested on the Coffee-Making 101 dataset, which depicts the use of various coffee machines in different environments. We implemented instruction types with varying visual-input contexts (single- vs. multi-frame / video clips), as well as different levels of perception tasks to demonstrate the capabilities of the framework. For multi-frame settings, users can specify frame ranges and temporal strides to control the temporal context included in each instruction. This design enables the generation of data that captures both static and dynamic visual information, facilitating the creation of diverse datasets for training and evaluation of vision-language models.

The diagram below presents a streamlined workflow for generating instruction sets and conversation pairs to support the tuning of vision–language models (VLMs). When applied to the coffee-making frame sequence (Figure 2), the process begins with annotated elements – such as the presence of a coffee machine, a hand, and a cup – along with their spatial and functional relationships. These include the hand holding the cup, the button positioned on top of the machine, the machine being in a closed state, and the action of placing the cup beneath it. Together, these annotations provide a rich, text-based context for the language model (LLM).

Figure 1: Workflow illustrating how structured visual annotations are transformed into instruction sets.

The LLM is then prompted with a variety of instruction types, including generating captions to describe the sequence and producing diverse visual question–answer pairs (Figure 2; type examples shown: Captioning, Multiple Choice, Free Form, Process Understanding). Instruction generation is guided by a combination of internal system prompts and user-configured prompts, which help align the output with specific user needs. Internal guardrails ensure that the instructions remain focused and relevant. Users can also control parameters such as sequence length and stride to fine-tune the granularity of the generated content. Furthermore, when a reference process is provided, the LLM can generate structured, process-focused instructions that coherently describe or guide the entire coffee-making procedure.

Figure 2: Input frames from a coffee-making video sampled at 1 frame per second (fps). The resultant instruction types (Captioning, Multiple Choice, Free Form, Process Understanding) are shown below.

For this sequence, the instruction generation produced:

Captioning

Question: Can you summarize the activity in the video by generating a factual caption related to the coffee-making process?

Response: A right hand grabs a small coffee cup and places it under the spout of a capsule machine, preparing for coffee extraction.

Multiple Choice Question

Question: What action does the right hand perform during the coffee-making process in this clip?

- Presses the button on the coffee machine.

- Places the coffee cup under the machine spout.

- Holds the handle of the coffee machine.

- Moves the capsule machine.

Response: B. Places the coffee cup under the machine spout.

Free-form Questioning

Question: Which hand interacts with the coffee cup?

Response: The right hand.

Temporal Understanding Question

Question: Which step is occurring in the coffee-making process, and what should happen next?

Response: The right hand is placing a coffee cup under the coffee machine. The next step should be to press the button on top of the machine to start the extraction process.

Alongside these capabilities, the synthesis also supports the generation of spatially aware instruction sets—such as prompts to locate specific objects within a scene or to describe particular regions of an image. It further enables temporally aware instructions, for example, asking at which timestamp a specific action occurs. The Ramblr Data Engine is designed to produce a wide variety of instruction types and can generate multiple variations of each, tailored to the user’s configuration.

For instance, if the LLM is prompted to generate five instruction types for a given frame annotation, (such as Captioning, Free-form QA, Multiple Choice QA, Temporal Process Understanding, and Spatial Grounding) and is configured to produce five variations per type, this results in 25 unique instructions from a single frame annotation. This flexibility and scalability empower users to achieve significantly higher output, often 25 times or more instruction sets and conversation pairs, with the same level of effort.

Conclusion

Our framework demonstrates that structured annotations can be transformed into instruction-rich datasets for VLM training. The framework results demonstrate that existing annotation workflows can be extended beyond detection or segmentation to serve as a source of diverse and semantically meaningful training data. Future work will explore extending the framework to additional modalities such as 3D perception and audio, as well as integrating active learning to adaptively expand instruction diversity and target model limitations.

References

[1] Zhang, Q. (2024). Generalizable Prompt Tuning for Vision-Language Models. arXiv preprint arXiv:2410.03189.

[2] Peng, W., Xie, S., You, Z., Lan, S., & Wu, Z. (2024). Synthesize, Diagnose, and Optimize: Towards Fine-Grained Vision-Language Understanding. arXiv preprint arXiv:2312.00081.

[3] Khemlani, S., Tran, T., Gyory, N., Harrison, A. M., Lawson, W. E., Thielstrom, R., Thompson, H., Singh, T., & Trafton, J. G. (2025). Vision language models are unreliable at trivial spatial cognition. arXiv preprint arXiv:2504.16061.

[4] Kuckreja, K., Danish, M. S., Naseer, M., Das, A., Khan, S., & Khan, F. S. (2023). GeoChat: Grounded large vision–language model for remote sensing. arXiv preprint arXiv:2311.15826.

[5] Zhao, B., Wu, B., He, M., & Huang, T. (2023). SVIT: Scaling up Visual Instruction Tuning. arXiv preprint arXiv:2307.04087.

[6] Krishna, R., Zhu, Y., Groth, O., Johnson, J., Hata, K., Kravitz, J., Chen, S., Kalantidis, Y., Li, L., Shamma, D. A., Bernstein, M. S., & Li, F. F. (2016). Visual Genome: Connecting language and vision using crowdsourced dense image annotations. arXiv preprint arXiv:1602.07332.

[7] Yuan, Y., Li, W., Liu, J., Tang, D., Luo, X., Qin, C., Zhang, L., & Zhu, J. (2023). Osprey: Pixel Understanding with Visual Instruction Tuning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2024).